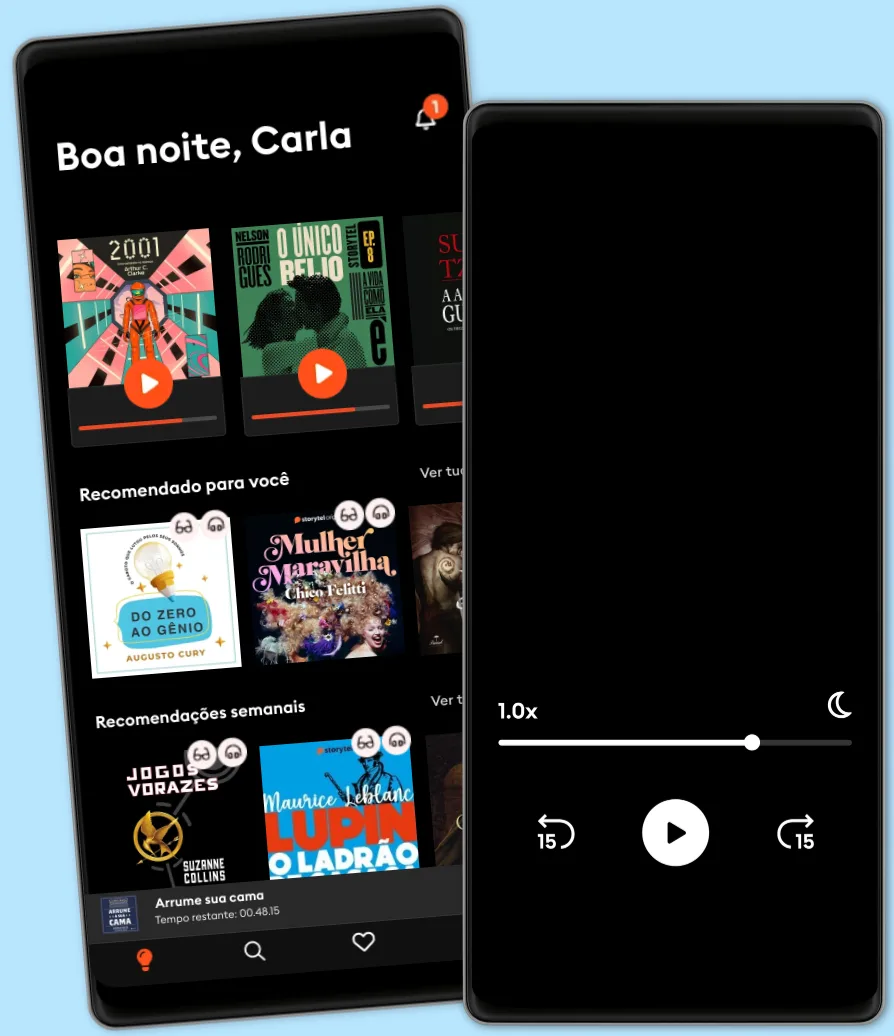

Ouça e leia

Entre em um mundo infinito de histórias

- Ler e ouvir tanto quanto você quiser

- Com mais de 500.000 títulos

- Títulos exclusivos + Storytel Originals

- 7 dias de teste gratuito, depois R$19,90/mês

- Fácil de cancelar a qualquer momento

Mastering Transformers: Build state-of-the-art models from scratch with advanced natural language processing techniques

- Language

- English

- Format

- Category

Non-fiction

Transformer-based language models have come to dominate natural language processing (NLP) research and are now the standard paradigm. With this book, you'll learn how to build a range of transformer-based NLP applications using the Python Transformers library. The book introduces Transformers by showing you how to write your first hello-world program. You'll then learn how a tokenizer works and how to train your own. As you advance, you'll explore the architecture of autoencoding models, such as BERT, and autoregressive models, such as GPT. You'll see how to train and fine-tune models for a variety of natural language understanding (NLU) and natural language generation (NLG) problems, including text classification, token classification, and text representation. The book also covers efficient models for challenging problems, such as long-context NLP tasks with limited computational capacity. You'll work with multilingual and cross-lingual problems, optimize models by monitoring their performance, and discover how to deconstruct these models for interpretability and explainability. Finally, you'll be able to deploy your transformer models in a production environment. By the end of this NLP book, you'll have learned how to use Transformers to solve advanced NLP problems.

© 2021 Packt Publishing (Ebook): 9781801078894

Release date

Ebook: September 15, 2021
