Large models on CPUs (Practical AI #221)

- Episode: 1692 of 2332

- Published: 2 May 2023

- Ratings: 0

- Duration: 38 min

- Language: English

- Category: Non-fiction

Model sizes are crazy these days, with billions and billions of parameters. As Mark Kurtz explains in this episode, this makes inference slow and expensive, even though 90% or more of the parameters may not influence the outputs at all.

Mark helps us understand the practicalities of model optimization and CPU inference and the progress being made in both, including the growing opportunities to run LLMs and other generative AI models on commodity hardware.

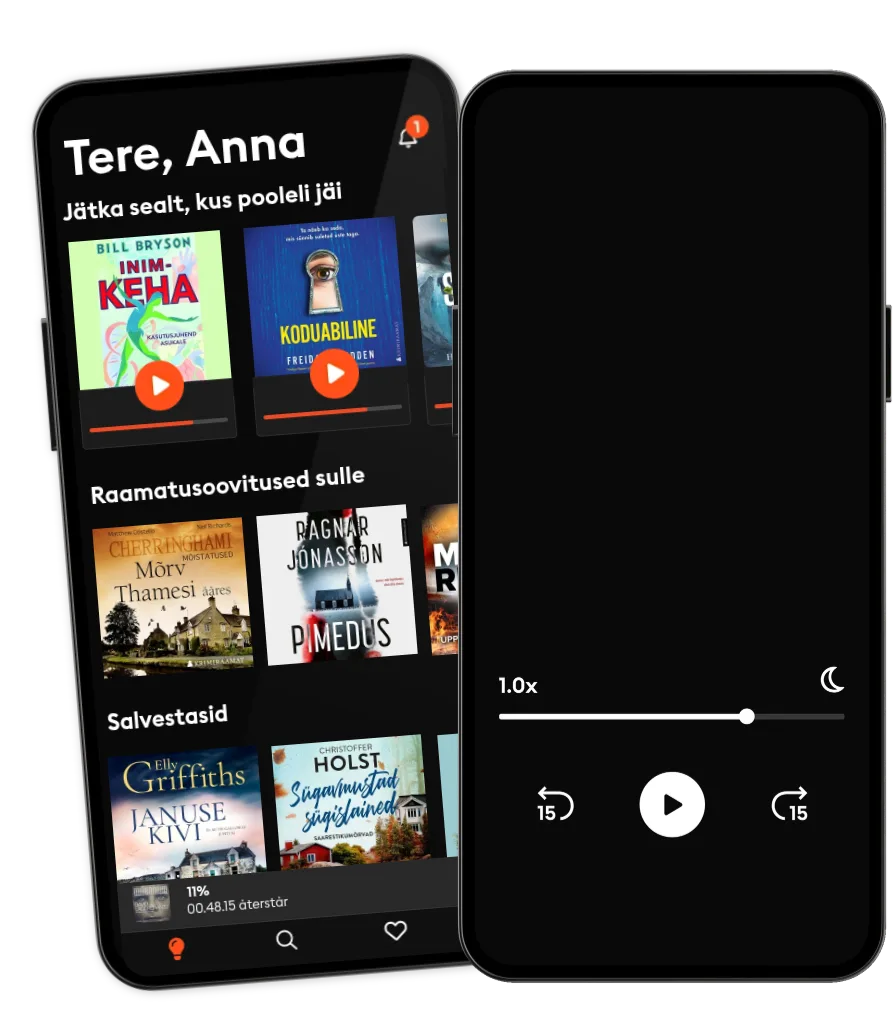
