DeepSparse for Efficient CPU Inference: The Complete Guide for Developers and Engineers

- Author

- Publisher

- Language learning

- English

- Format

- Collection

Non-fiction


"DeepSparse for Efficient CPU Inference" is a comprehensive and authoritative guide for engineers, researchers, and practitioners seeking to harness the full potential of sparse neural network models on modern CPU architectures. The book delivers a solid foundation in the theory and practice of model sparsification, detailing essential techniques such as structured and unstructured pruning, quantization, and hardware-aware design. Readers are guided through the intricate balance between model accuracy, computational performance, and resource utilization, with a particular emphasis on achieving efficient, scalable, and reliable inference.

The core of the book explores the DeepSparse Engine, an advanced execution framework purpose-built for high-performance sparse model inference on CPUs. Through clear explanations of the engine’s modular architecture, API layers, graph optimization techniques, and memory management innovations, readers gain actionable insight into deploying and optimizing sparse models. In-depth chapters cover integration with ONNX, custom operator development, low-latency real-time applications, NUMA optimizations, and the fine-tuning workflows necessary for robust, production-grade deployments. Best practices are complemented by rigorous methodologies for benchmarking, profiling, and automated performance assurance.

Enriched with real-world case studies in fields such as NLP, computer vision, healthcare, finance, and edge computing, the book offers practical strategies for deploying DeepSparse in both enterprise and distributed environments. Guidance on integrating with existing ML pipelines, ensuring security and compliance, and optimizing for cost and scalability makes this resource invaluable for organizations operating at scale. The concluding chapters illuminate future trends, ongoing research, and the expanding DeepSparse ecosystem, equipping readers with both the technical depth and the strategic perspective to stay ahead in the rapidly evolving field of efficient AI inference.

© 2025 NobleTrex Press (ebook): 6610000973590

Release date

Ebook: July 24, 2025

다른 사람들도 즐겼습니다 ...

- 생각편의점 김쾌대, 카이

- 쓸 만한 인간 박정민

- 신기한 맛 도깨비 식당 1 김용세, 김병선

- 사춘기 대 갱년기 제성은

- 생각하는 대로 그렇게 된다 제임스 앨런(James Allen)

- 사춘기 대 아빠 갱년기 제성은

- 무작정 쇼트트랙 이재영

- 신기한 맛 도깨비 식당 3 김용세, 김병선

- 신기한 맛 도깨비 식당 2 김용세, 김병선

- 용선생 처음 세계사1: 고대 문명~중세: 고대 문명~중세 김선혜, 정지윤, 노남희, 뭉선생, 윤효식, 이우일, 김선빈, 사회평론 역사연구소

- 죽이고 싶은 아이 이꽃님

- 수상한 기차역 박현숙

- 단톡방을 나갔습니다 2 신은영

- 수상한 지하실 박현숙

- 돈의 속성 김승호

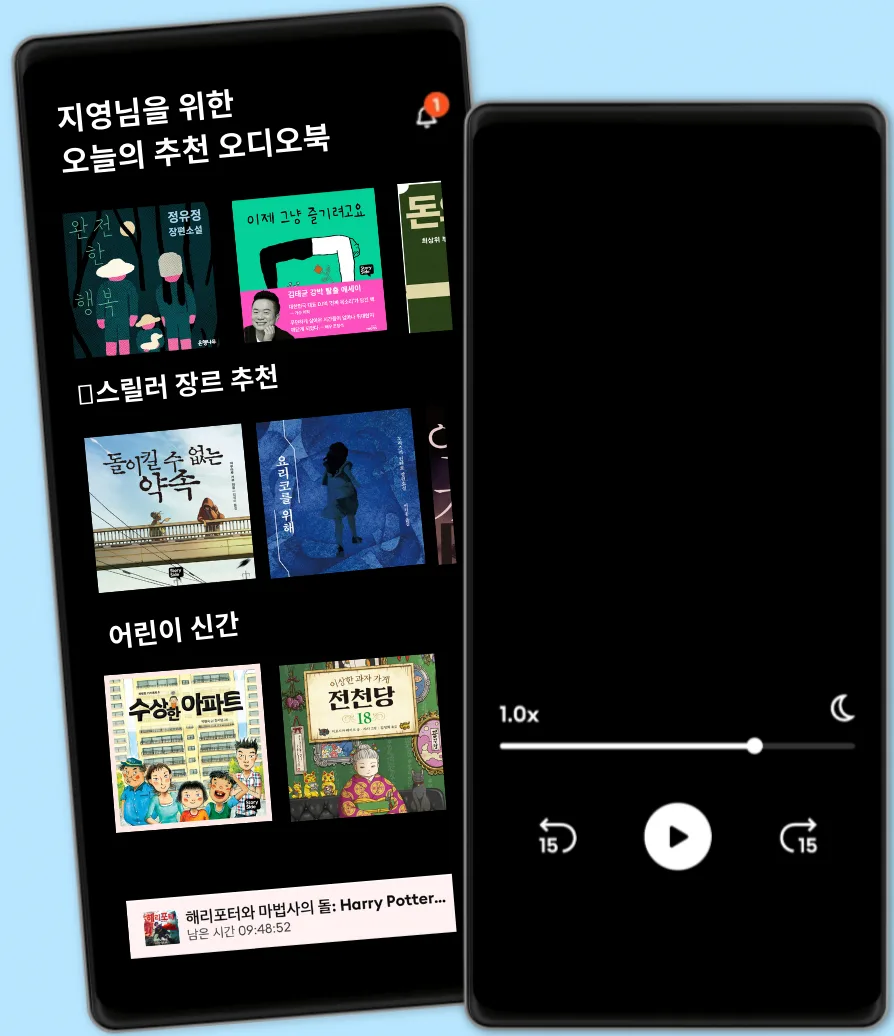

언제 어디서나 스토리텔

국내 유일 해리포터 시리즈 오디오북

5만권이상의 영어/한국어 오디오북

키즈 모드(어린이 안전 환경)

월정액 무제한 청취

언제든 취소 및 해지 가능

오프라인 액세스를 위한 도서 다운로드

스토리텔 언리미티드

5만권 이상의 영어, 한국어 오디오북을 무제한 들어보세요

13800 원 /월

사용자 1인

무제한 청취

언제든 해지하실 수 있어요

패밀리

친구 또는 가족과 함께 오디오북을 즐기고 싶은 분들을 위해

매달 21500 원 원 부터

2-3 계정

무제한 청취

언제든 해지하실 수 있어요

21500 원 /월