TensorRT Inference Optimization: The Complete Guide for Developers and Engineers

- Language: English

- Category: Non-fiction

"TensorRT Inference Optimization" is the definitive guide to harnessing the full power of NVIDIA's TensorRT for high-performance deep learning inference. Beginning with a foundational overview of deep learning inference workflows, model format interoperability, and precision modes, the book systematically explores the architectural principles behind TensorRT and practical strategies for optimizing and deploying neural networks across a spectrum of hardware platforms—from data center GPUs to edge devices. Readers gain an in-depth understanding of model conversion processes, handling custom operators, and ensuring reliable and efficient model deployment in real-world production environments.

The book delves into advanced engine optimization techniques including graph-level transformations, memory management, calibration for lower-precision inference, and the integration of custom plugin layers through high-performance CUDA kernels. Comprehensive coverage is also given to deployment architectures, scalable serving solutions such as Triton, multi-GPU/multi-instance scaling, and robust practices for model versioning, security, and continuous integration/deployment. Profiling and benchmarking chapters provide hands-on methodologies for identifying bottlenecks and balancing throughput, latency, and accuracy, ensuring peak system performance.

Catering to both practitioners and researchers, the guide navigates state-of-the-art topics such as asynchronous multi-stream inference, distributed and federated deployment scenarios, post-training quantization, sequence model optimization, and automation within MLOps pipelines. Concluding with future trends, open challenges, and the expanding TensorRT community ecosystem, this volume is an essential resource for professionals seeking to maximize deep learning inference efficiency and drive innovation in AI-powered applications.

© 2025 HiTeX Press (E-Book): 6610001024444

Release date

E-book: August 20, 2025

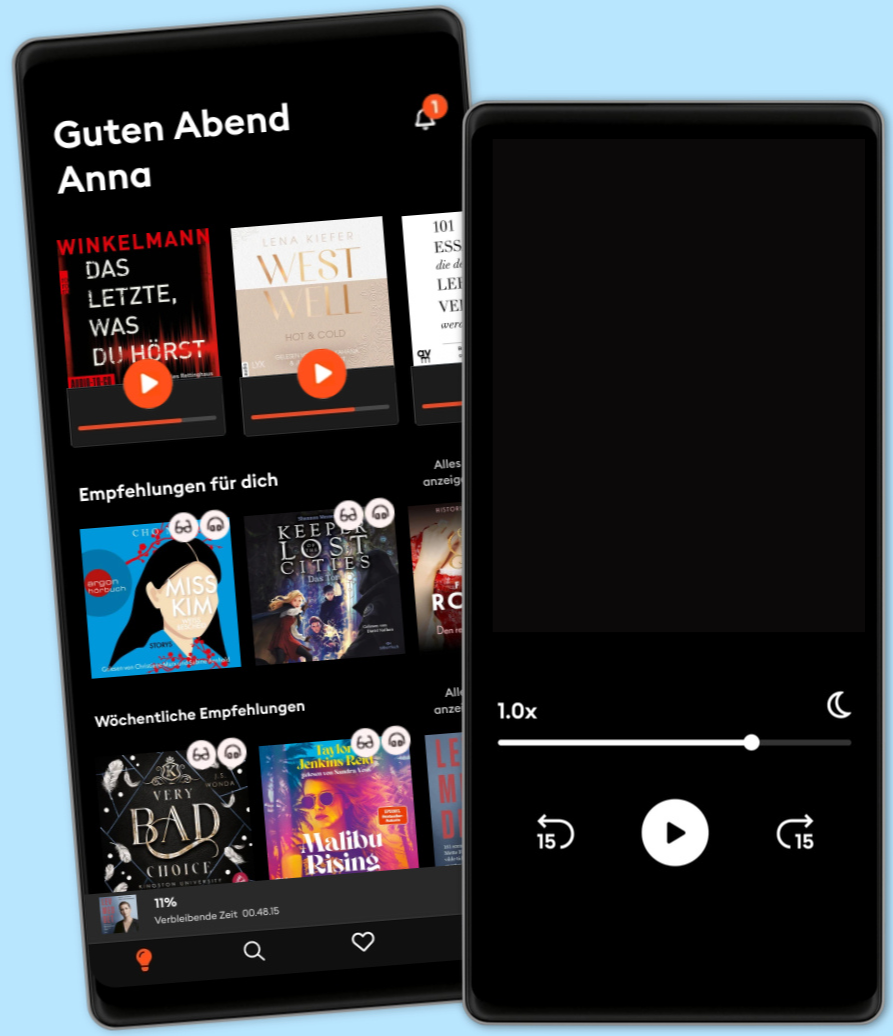
