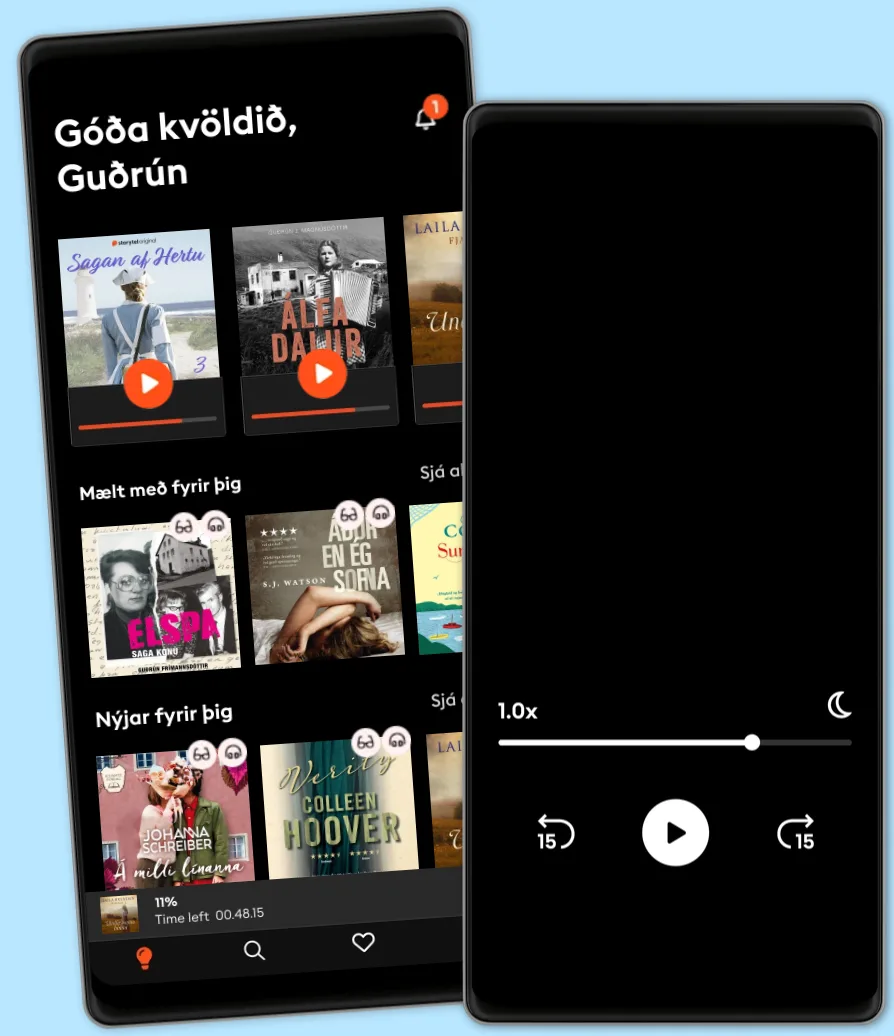

Hlustaðu og lestu

Stígðu inn í heim af óteljandi sögum

- Lestu og hlustaðu eins mikið og þú vilt

- Þúsundir titla

- Getur sagt upp hvenær sem er

- Engin skuldbinding

Bootstrapping Language-Image Pretraining: The Complete Guide for Developers and Engineers

- Publisher: HiTeX Press

- Language: English

- Format: E-book

- Category: Non-fiction

"Bootstrapping Language-Image Pretraining" is a comprehensive guide to multimodal AI, exploring in depth how models learn from both language and visual data. The book begins with a conceptual foundation, covering the key principles that distinguish multimodal pretraining from traditional, unimodal approaches. It examines joint representation learning, architectural paradigms (such as alignment versus fusion), and the critical bottlenecks that constrain robust vision-language models. Readers are introduced to influential early models, benchmark datasets, and the practical challenges involved in handling rich, heterogeneous data.

In subsequent chapters, the book surveys the architectural building blocks powering today’s most advanced systems, from vision and text encoders to sophisticated cross-modal attention mechanisms and scalable fusion strategies. Detailed attention is given to the principles and practices of self-supervised learning and bootstrapping, including innovative data augmentation techniques, curriculum learning, and mechanisms for leveraging weak supervision at scale. Methods for contrastive and generative pretraining are thoroughly analyzed, along with the multi-objective loss functions and large-scale distributed optimization that enable modern models to learn rich and transferable representations from massive, noisy datasets.

Recognizing the real-world impact of such technologies, the volume dedicates essential chapters to the responsible deployment of multimodal AI. It presents practical strategies to mitigate bias, bolster model robustness, and promote transparency and fairness across modalities. The book closes with an authoritative survey of evaluation protocols and emerging research frontiers, including instruction tuning, multilingual pretraining, and privacy-preserving approaches. "Bootstrapping Language-Image Pretraining" serves as an essential resource for researchers and practitioners seeking both a foundational understanding and a forward-looking roadmap in the pursuit of next-generation vision-language intelligence.

© 2025 HiTeX Press (E-book): 6610000964604

Release date

E-book: 11 July 2025
