Large models on CPUs (Practical AI #221)

- Episode: 1692 of 2328

- Published: 2 May 2023

- Length: 38 min

- Language: English

- Category: Non-fiction

Model sizes have ballooned, with many models now carrying billions of parameters. As Mark Kurtz explains in this episode, this makes inference slow and expensive, even though 90% or more of the parameters may not influence the outputs at all.

Mark helps us understand the practicalities of model optimization and CPU inference and the progress being made in both, including the growing opportunities to run LLMs and other generative AI models on commodity hardware.

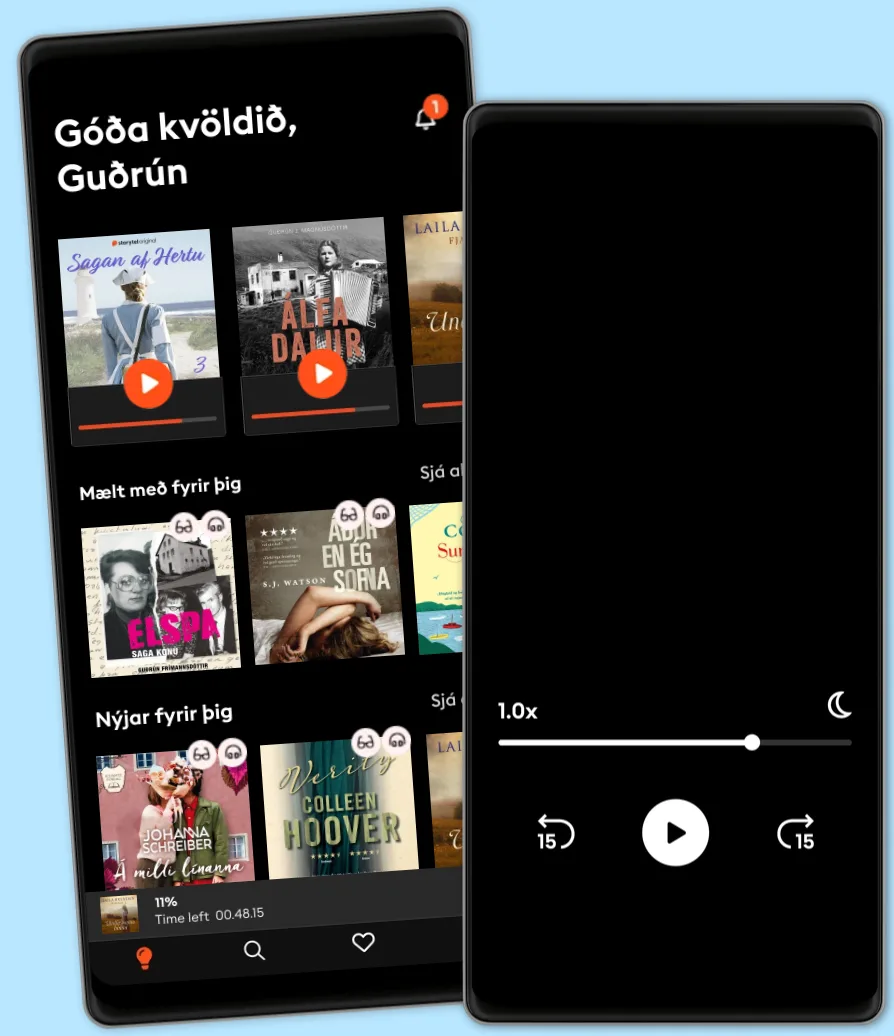
