Large models on CPUs (Practical AI #221)

- Episode: 1692 of 2328

- Published: 2 May 2023

- Length: 38 min

- Language: English

- Category: Nonfiction

Model sizes are crazy these days, with billions and billions of parameters. As Mark Kurtz explains in this episode, this makes inference slow and expensive, even though 90% or more of the parameters may not influence the outputs at all.

Mark helps us understand the practicalities of model optimization and CPU inference and the progress being made in both, including the growing opportunities to run LLMs and other generative AI models on commodity hardware.

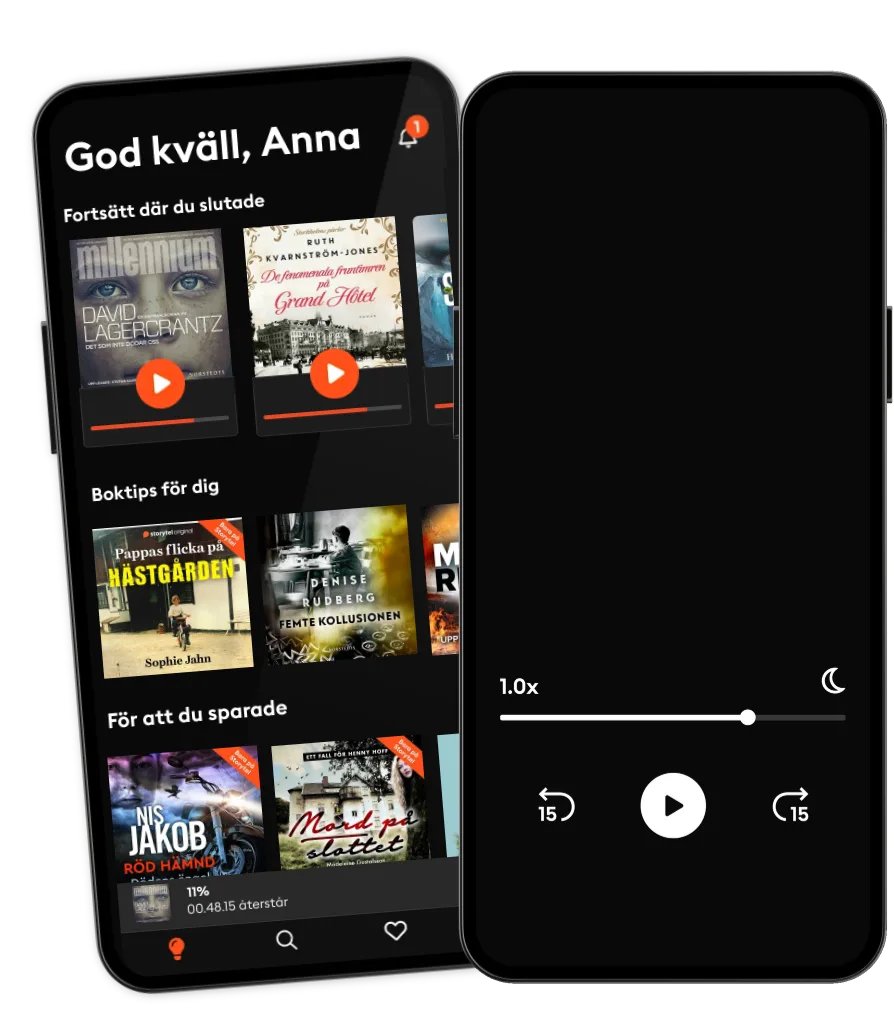
