TensorRT Inference Optimization: The Complete Guide for Developers and Engineers

- By

- Publisher

- Language

- English

- Format

- Category

Nonfiction


"TensorRT Inference Optimization" is the definitive guide to harnessing the full power of NVIDIA's TensorRT for high-performance deep learning inference. Beginning with a foundational overview of deep learning inference workflows, model format interoperability, and precision modes, the book systematically explores the architectural principles behind TensorRT and practical strategies for optimizing and deploying neural networks across a spectrum of hardware platforms—from data center GPUs to edge devices. Readers gain an in-depth understanding of model conversion processes, handling custom operators, and ensuring reliable and efficient model deployment in real-world production environments.

The book delves into advanced engine optimization techniques including graph-level transformations, memory management, calibration for lower-precision inference, and the integration of custom plugin layers through high-performance CUDA kernels. Comprehensive coverage is also given to deployment architectures, scalable serving solutions such as Triton, multi-GPU/multi-instance scaling, and robust practices for model versioning, security, and continuous integration/deployment. Profiling and benchmarking chapters provide hands-on methodologies for identifying bottlenecks and balancing throughput, latency, and accuracy, ensuring peak system performance.

Catering to both practitioners and researchers, the guide navigates state-of-the-art topics such as asynchronous multi-stream inference, distributed and federated deployment scenarios, post-training quantization, sequence model optimization, and automation within MLOps pipelines. Concluding with future trends, open challenges, and the expanding TensorRT community ecosystem, this volume is an essential resource for professionals seeking to maximize deep learning inference efficiency and drive innovation in AI-powered applications.

© 2025 HiTeX Press (E-book): 6610001024444

Release date

E-book: 20 August 2025

Yang lain juga menikmati...

- 8 Intisari Kecerdasan Finansial Indra Noveldy

- 77. Filosofi Teras - Pengantar untuk Belajar Filosofi Stoa, Tapi ... Aditya Hadi - PODLUCK

- 83. Atomic Habits - Cara Bangun Kebiasaan Baik Sedikit Demi Sedikit Aditya Hadi - PODLUCK

- Hujan Tere Liye

- Bumi Tere Liye

- Ronggeng Dukuh Paruk Ahmad Tohari

- Resign! Almira Bastari

- Sang Alkemis Paulo Coelho

- Dua Dini Hari Chandra Bientang

- Rumah Lebah Ruwi Meita

- A Court of Mist and Fury (1 of 2) [Dramatized Adaptation]: A Court of Thorns and Roses 2 Sarah J. Maas

- Terusir Buya Hamka

- Heated Rivalry Rachel Reid

- HOW TO WIN FRIENDS & INFLUENCE PEOPLE: Enriched edition. Dale Carnegie

- Filosofi Kopi: Kumpulan Cerita dan Prosa Satu Dekade Dee Lestari

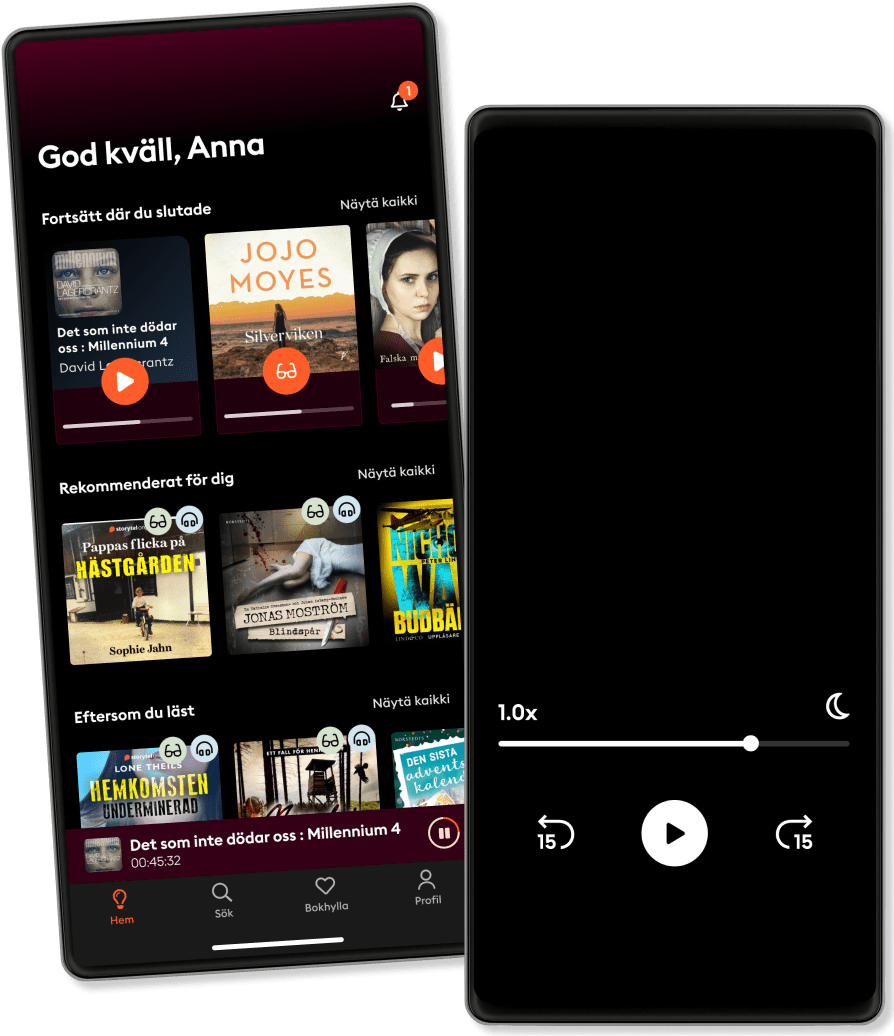

Selalu dengan Storytel

Lebih dari 900.000 judul

Mode Anak (lingkungan aman untuk anak)

Unduh buku untuk akses offline

Batalkan kapan saja

Premium

Bagi yang ingin mendengarkan dan membaca tanpa batas.

Rp39000 /bulan

Akses bulanan tanpa batas

Batalkan kapan saja

Judul dalam bahasa Inggris dan Indonesia

Premium 6 bulan

Bagi yang ingin mendengarkan dan membaca tanpa batas

Rp189000 /6 bulan

Akses bulanan tanpa batas

Batalkan kapan saja

Judul dalam bahasa Inggris dan Indonesia

Local

Bagi yang hanya ingin mendengarkan dan membaca dalam bahasa lokal.

Rp19900 /bulan

Akses tidak terbatas

Batalkan kapan saja

Judul dalam bahasa Indonesia

Local 6 bulan

Bagi yang hanya ingin mendengarkan dan membaca dalam bahasa lokal.

Rp89000 /6 bulan

Akses tidak terbatas

Batalkan kapan saja

Judul dalam bahasa Indonesia