TensorRT Inference Optimization: The Complete Guide for Developers and Engineers

- Author

- Publisher: HiTeX Press

- Language: English

- Format: Ebook

- Category: Non-fiction


"TensorRT Inference Optimization" is the definitive guide to harnessing the full power of NVIDIA's TensorRT for high-performance deep learning inference. Beginning with a foundational overview of deep learning inference workflows, model format interoperability, and precision modes, the book systematically explores the architectural principles behind TensorRT and practical strategies for optimizing and deploying neural networks across a spectrum of hardware platforms—from data center GPUs to edge devices. Readers gain an in-depth understanding of model conversion processes, handling custom operators, and ensuring reliable and efficient model deployment in real-world production environments.

The book delves into advanced engine optimization techniques including graph-level transformations, memory management, calibration for lower-precision inference, and the integration of custom plugin layers through high-performance CUDA kernels. Comprehensive coverage is also given to deployment architectures, scalable serving solutions such as Triton, multi-GPU/multi-instance scaling, and robust practices for model versioning, security, and continuous integration/deployment. Profiling and benchmarking chapters provide hands-on methodologies for identifying bottlenecks and balancing throughput, latency, and accuracy, ensuring peak system performance.

Catering to both practitioners and researchers, the guide navigates state-of-the-art topics such as asynchronous multi-stream inference, distributed and federated deployment scenarios, post-training quantization, sequence model optimization, and automation within MLOps pipelines. Concluding with future trends, open challenges, and the expanding TensorRT community ecosystem, this volume is an essential resource for professionals seeking to maximize deep learning inference efficiency and drive innovation in AI-powered applications.

© 2025 HiTeX Press (Ebook): 6610001024444

Release date

Ebook: 20 August 2025

Aðrir höfðu einnig áhuga á...

- Hulda Ragnar Jónasson

- Sé eftir þér Colleen Hoover

- Hin helga kvöl Stefán Máni

- Hildur Satu Rämö

- Hið ósagða - Ættarsaga Torill Thorup

- Örlagadansinn Birgitta H. Halldórsdóttir

- Fjórar árstíðir. Sjálfsævisaga Reynir Finndal Grétarsson

- Lára fer í sund Birgitta Haukdal

- Þernan: Undir yfirborðinu Freida McFadden

- Ókunn fortíð - Ættarsaga Torill Thorup

- 18 rauðar rósir Unnur Lilja Aradóttir

- Englatréð Lucinda Riley

- Kókaín í konungsríki Øistein Monsen, Torgeir Krokfjord

- Dansað við djöful Birgitta H. Halldórsdóttir

- Dagbók Kidda klaufa #19 - Sull og bull Jeff Kinney

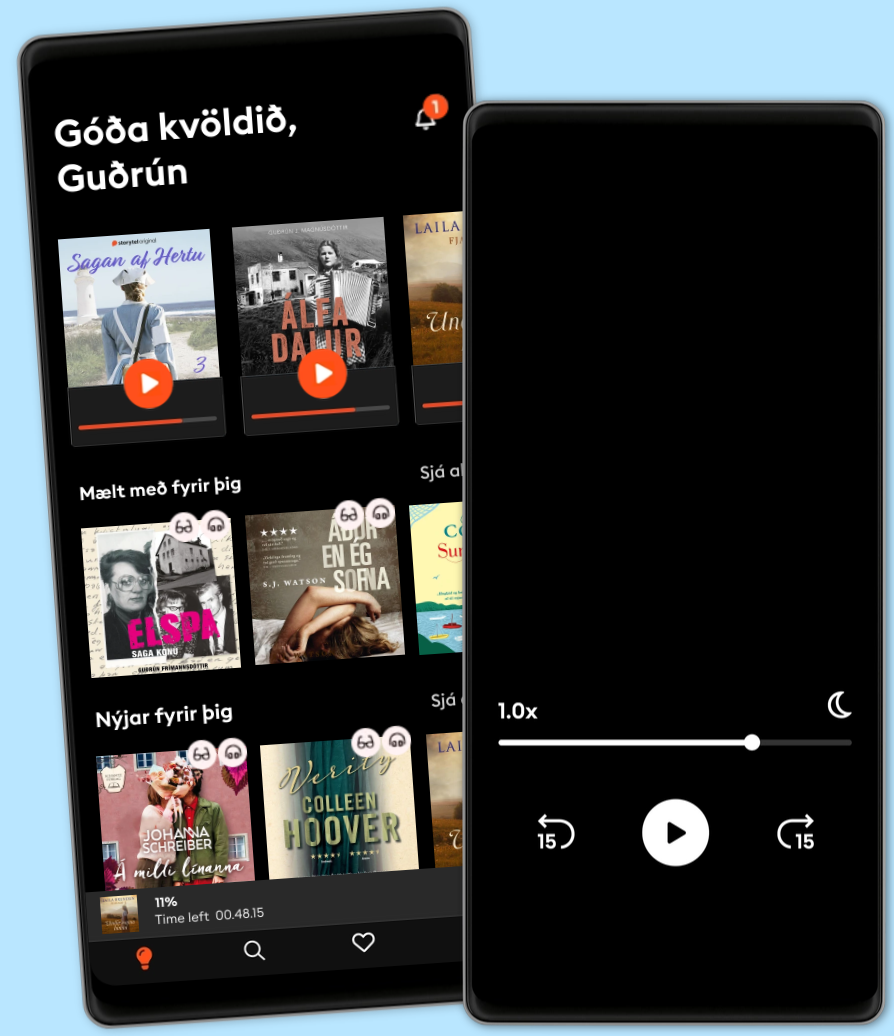

Veldu áskrift

1 milljón hljóð- og rafbækur

Barnvænt viðmót með Kids Mode

Hlustaðu og lestu á sama tíma

Vistaðu bækurnar fyrir ferðalögin

Unlimited

Besti valkosturinn fyrir einn notanda

$3290 kr á mánuði

1 milljón hljóð- og rafbækur

Engin skuldbinding

Getur sagt upp hvenær sem er

Family

Fyrir þau sem vilja deila sögum með fjölskyldu og vinum.

Frá $3990 kr á mánuði

1 milljón hljóð- og rafbækur

Engin skuldbinding

Getur sagt upp hvenær sem er

$3990 kr á mánuði